It has been about three months since I wrote my last blog post, ‘How to Become an Amateur Biologist 4’. I have been travelling through Panama, Costa Rica, Nicaragua and Ireland. The last one is a bit of an outlier, but you do anything to see your favourite artist, in my case Theo Katzman. And now I am sitting here, back in my student room in Leiden in the Netherlands, where I started uni again last week. Back to studying, back to routine and back to the lovely Dutch weather. I wanted to write one last blog post for Dinalab, to update you on how I finished my project, but also to reflect on what I have learned at Dinalab, in Gamboa, as a certified (self-named ;p) amateur biologist.

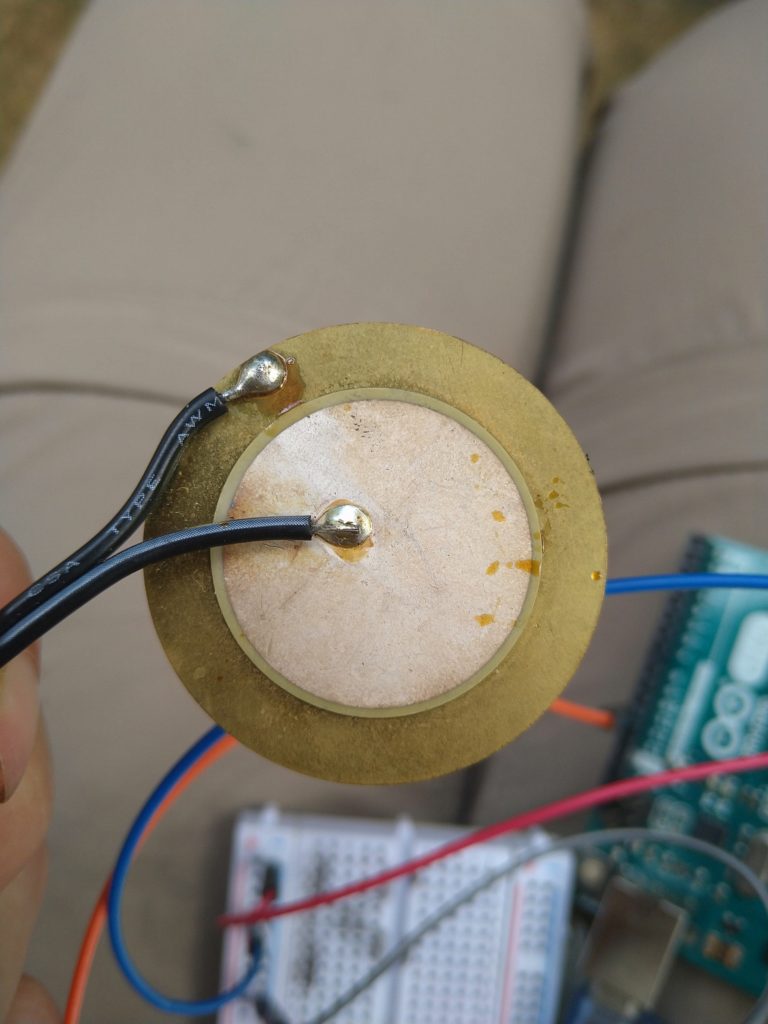

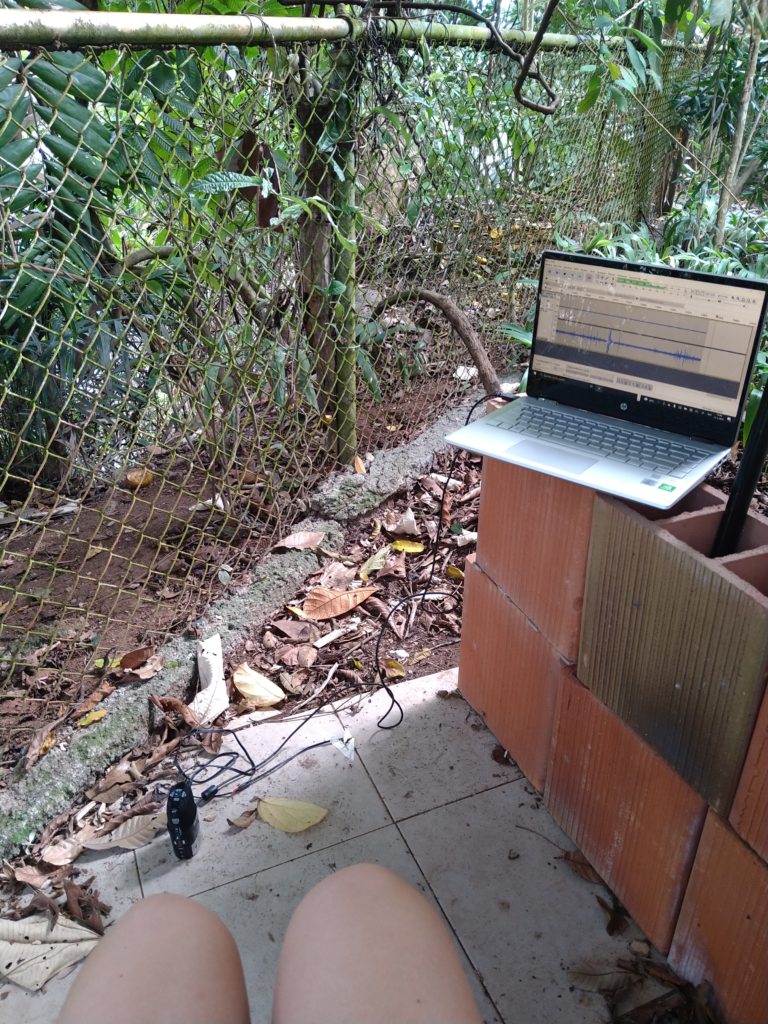

Eventually, I ended up making boots (using Andy’s massive old boots) in which people could experience what it is like to ‘hear sounds’, or better said perceive vibrations, like a leafcutter ant does. For the boots, I created ‘legwarmers’ into which I sewed vibration motors (two in each legwarmer; four in total). In Arduino I wrote a piece of code that drives the vibration motors with data from audio recordings of leafcutters cutting and foraging leaves. The audio recordings were converted into numerical values, so that the motors vibrate more intensely when the values from the recording are higher. These values are derived from the amplitude of the audio recording (which is also influenced by pitch in the audio file). However, I wanted to make the experience a little more interesting and let the four motors vibrate at different times and with different intensities and speeds. The audio recording consists of multiple ants making ‘sound’, so by letting each motor vibrate differently I wanted to recreate the effect of individual ants producing these vibrations/sounds.
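For readers curious what such a mapping could look like, here is a minimal sketch in plain C++. It is not the original SignificAnt code: the function names, the 0 to 1023 amplitude range and the idea of giving each motor its own offset into the recording are all assumptions made for illustration. On an Arduino, each resulting value could then be passed to `analogWrite`, which accepts a duty value from 0 to 255 on most boards.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical names throughout; this is an illustration, not the project's code.
constexpr std::size_t kMotorCount = 4;  // two motors per legwarmer

// Scale one amplitude sample (0..maxAmplitude) to a motor intensity (0..255).
// Louder samples give stronger vibration; out-of-range samples are clamped.
std::uint8_t amplitudeToPwm(int amplitude, int maxAmplitude) {
    assert(maxAmplitude > 0);
    const int clamped = std::max(0, std::min(amplitude, maxAmplitude));
    // Use long for the product: int is only 16 bits on many Arduino boards.
    return static_cast<std::uint8_t>(static_cast<long>(clamped) * 255 / maxAmplitude);
}

// Each motor reads the same recording at its own offset (an assumption),
// so the four motors vibrate out of step, like separate ants.
std::array<std::uint8_t, kMotorCount> motorLevels(const int* samples,
                                                  std::size_t sampleCount,
                                                  std::size_t step,
                                                  int maxAmplitude) {
    assert(sampleCount > 0);
    std::array<std::uint8_t, kMotorCount> levels{};
    for (std::size_t m = 0; m < kMotorCount; ++m) {
        const std::size_t offset = m * sampleCount / kMotorCount;
        const std::size_t index = (step + offset) % sampleCount;
        levels[m] = amplitudeToPwm(samples[index], maxAmplitude);
    }
    return levels;
}
```

Calling `motorLevels` once per time step with an increasing `step` walks all four motors through the recording, each starting at a different point. Different speeds per motor could be added in the same spirit, for example by advancing each motor's index by a different amount per step.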

I named my project ‘SignificAnt’. Ants are often seen as pests, as little, insignificant creatures. However, ants, or in this case leafcutter ants, play a very important part in decomposition, soil health and other basic ecological functions. So, the name refers to the important and indispensable role that leafcutter ants play in the ecosystem. Besides the meaning of ‘significant’ and the clearly recognizable ‘ant’, ‘sign’ is also an important part of the word. Since the concept of ‘sign’ plays an important role in Jakob von Uexküll’s Umwelt, the name seemed to fit perfectly.

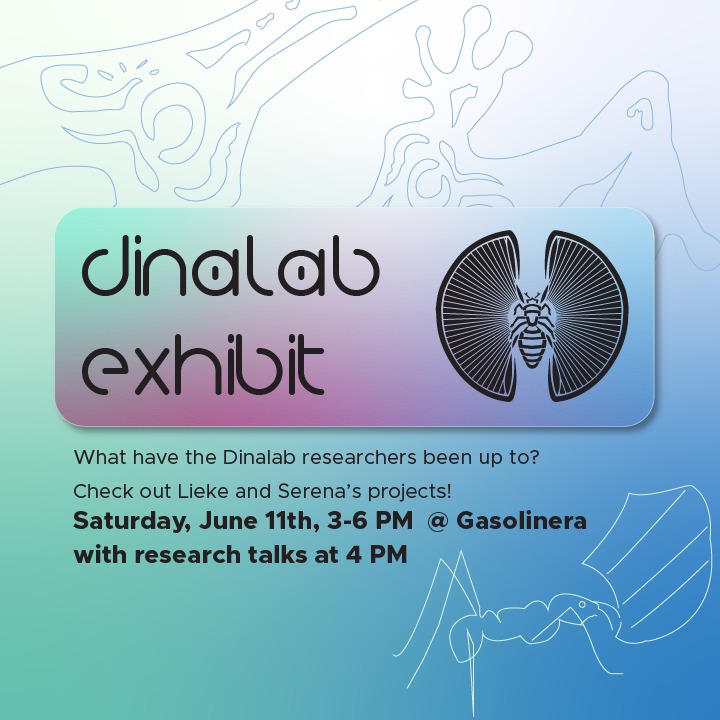

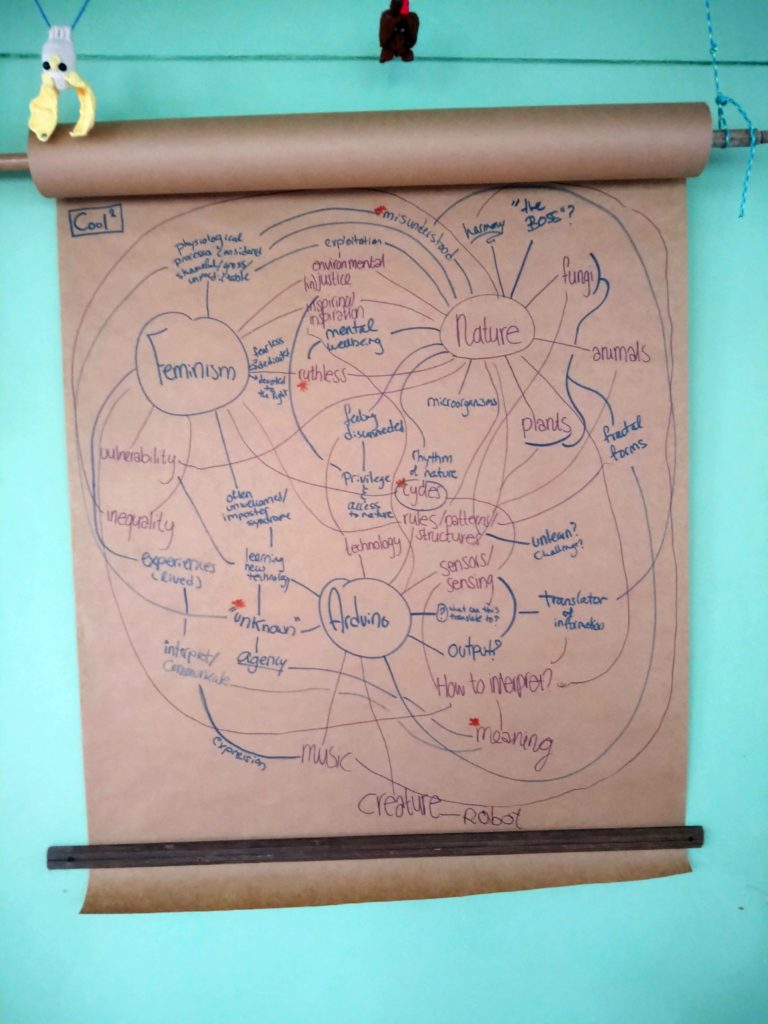

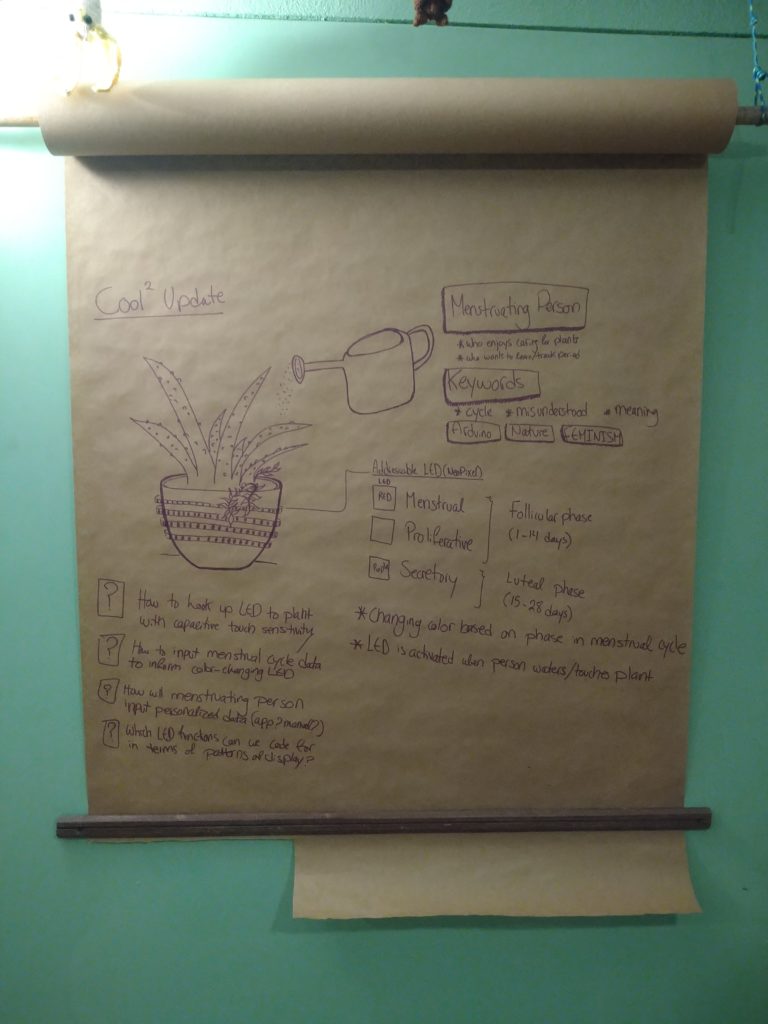

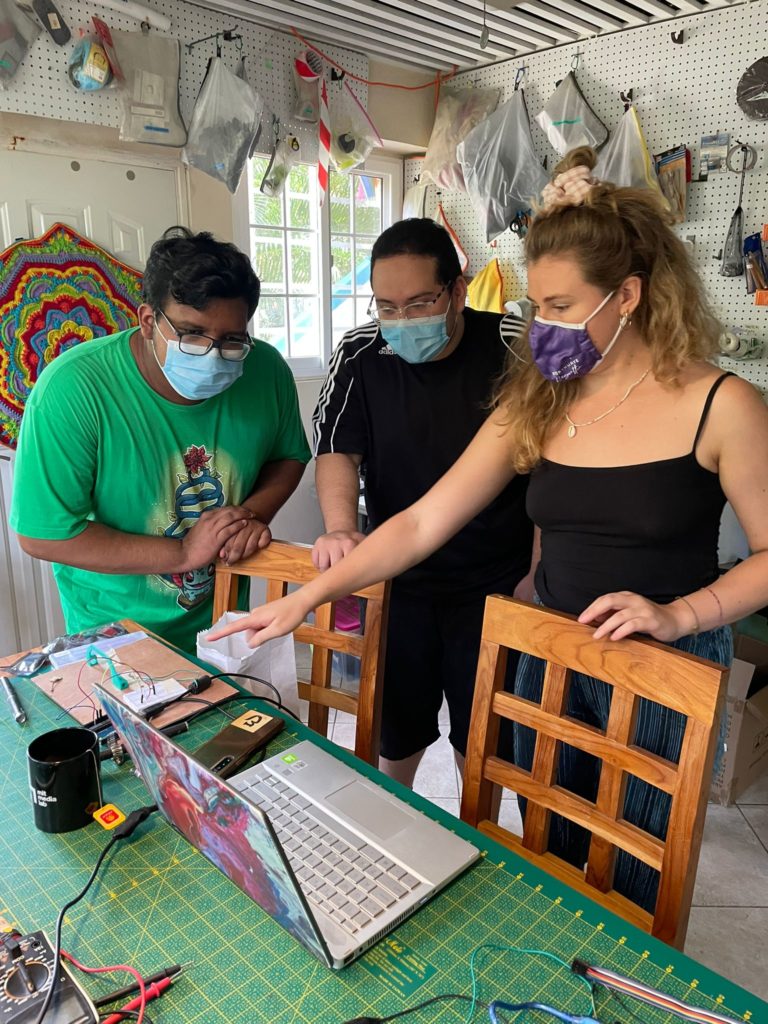

I got to test out the SignificAnt boots at the first (what an honour!) Dinalab exhibit, which took place on the 11th of June. The week before the exhibit, Serena and I worked incredibly hard, until the late hours, sleep deprived and eating poorly, but also laughing incredibly hard together. Serena’s delicious tea and listening to Theo Katzman kept us sane. The exhibit was a big success; many people showed up and showed genuine interest. We both presented our own individual projects and our menstruation plant, Andy and Kitty sold lots of keychains for the APPC (including my designs 😊) and lots of people tried out my boots and asked interesting questions. In the evening, Josie, Finn and I organised a goodbye/birthday party and it was the perfect ending to a very fun, but exhausting day.

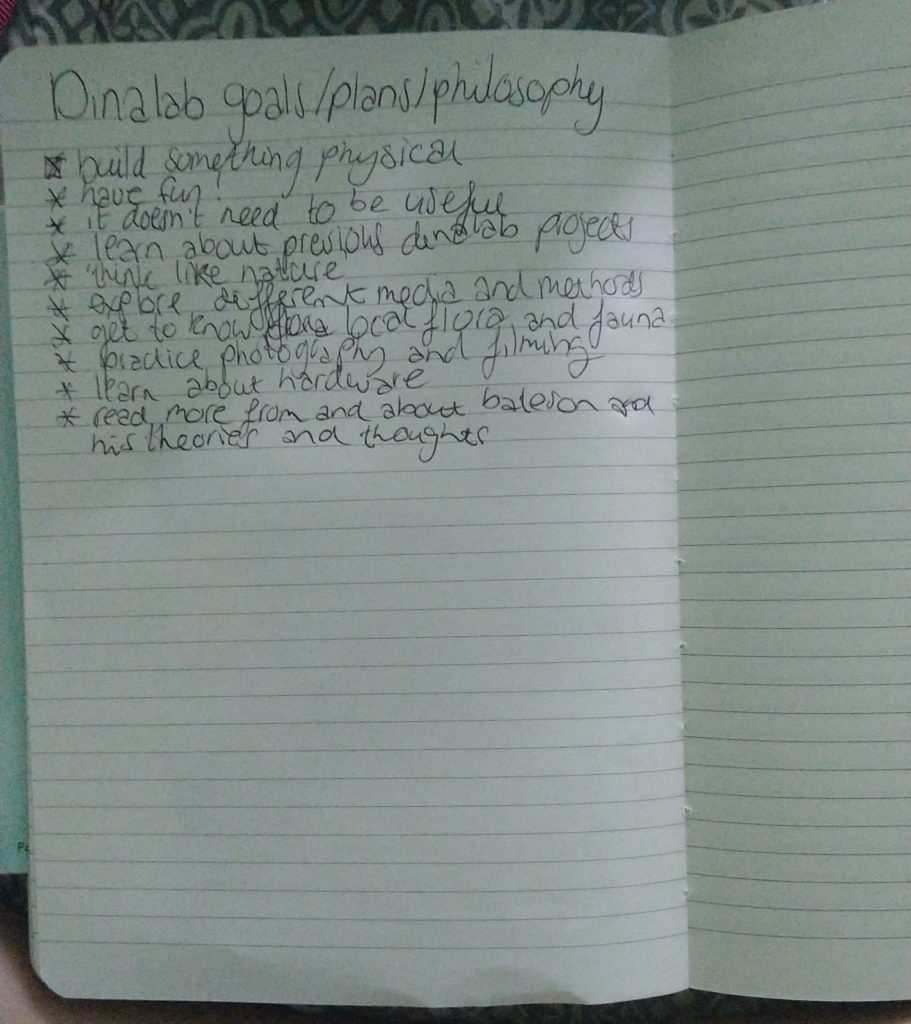

As the title suggests, in this post I am going to tell you how I became an amateur biologist. Before going to Dinalab I wrote down some goals and a philosophy for my stay there. I will elaborate on them below.

The first goal of the project, or of my stay at Dinalab in general, was to make something physical, as in a tangible product. I am a person who very much lives in my head. I think a lot, so I have a lot of ideas and thoughts about things, but I have great difficulty bringing these ideas and thoughts out into the world. Although it was still super hard and challenging for me to go from a framework, to a question, to a concept and eventually to the actual product, I am glad to say that I did it!!! I learned so much from the whole process. Andy helped me out a lot, which I am very grateful for. So I am happy to check this most important goal off my list!

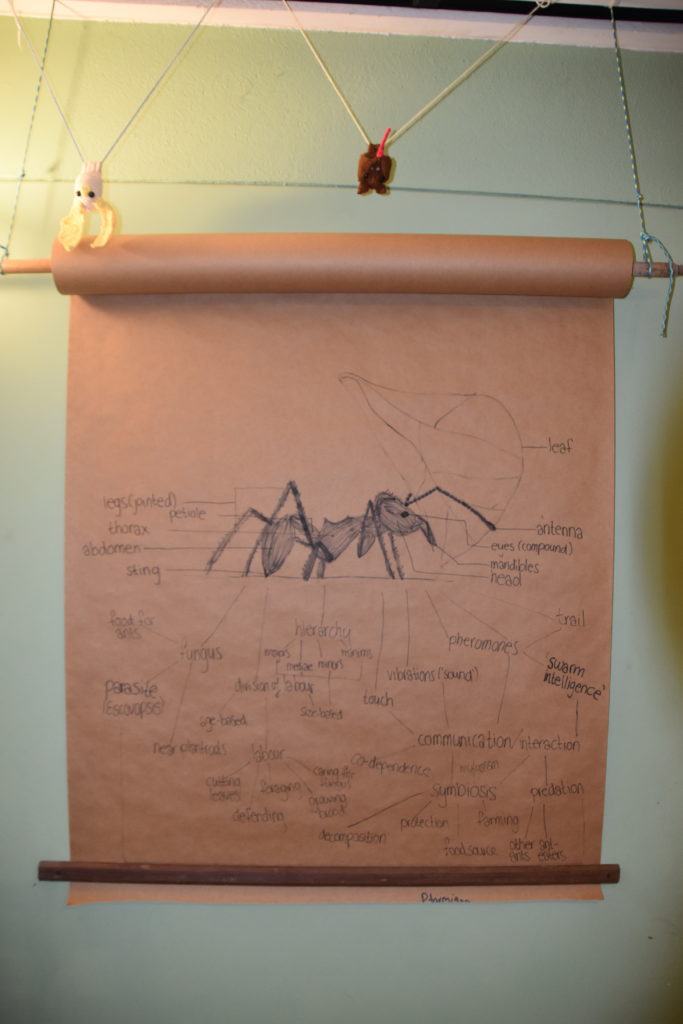

My second goal was to think like nature. Von Uexküll’s paper is all about this: studying an organism in its own environment, in its own small ecosystem, in its own right. In order to be able to do this, you have to think like nature and understand everything that goes on in the world of your subject of study. This is actually not very feasible, since it takes a lifetime to understand an organism fully in its own context. Nevertheless, I feel I have come a little bit closer to thinking like nature, by looking at the leafcutter ants at work day in, day out. I also see ‘think like nature’ more as a philosophy than as a plan per se.

One other important goal that I had was to have fun! School and university sometimes make you lose contact with the fun part of studying and discovering the world, since the system is based on deadlines, performance and grades. However, this was also harder than expected, since I am a major perfectionist and my project is part of my Master’s degree and gets evaluated. Together with Andy, I made a very basic ‘Ant Gate’, which could detect ants walking through the gate. Luckily, besides having fun making stuff, I also got to have lots of fun with my dearest lab buddy Serena, dancing around Dinalab, singing our souls out, getting angry at the code of our project and catching up on the hot goss of Gamboa. Andy was my partner in crime mindwise, with the same crazy ideas and thoughts about the world, and Kitty was my partner in crime at APPC, talking about how cute and funny(-looking) sloths are and bashing the people from the hotel.

Another goal, although a very basic one, was to get to know and learn about the local flora and fauna. I learned so much about different kinds of animals and plants from scientists from the Smithsonian, local people and Kitty and Andy: about bats, butterflies, birds, frogs, plants and trees, ants and lots of other big and small creatures.

And with all this new knowledge comes a new sense of curiosity. Nature keeps on surprising and amazing me, and being in the jungle made this all the more apparent. It brought me so much inspiration and new interests, and even more questions than answers. I hope to bring this home to the Netherlands. Although the environment is very different, I hope to stay curious, adventurous, open-minded and respectful of nature and all its living beings, which in the end are, for me, the most important character traits of an (amateur) biologist.

Unfortunately, a few days after the Dinalab exhibit my stay was coming to an end and I needed to start getting ready for my big backpacking trip, cleaning up Dinalab and saying goodbye to my dearest Gamboa friends and family. These last days were really stressful and emotional and I had a really hard time saying goodbye to Gamboa, the people, the nature and the animals.

My experience at Dinalab has been beyond my expectations. Andy and Kitty’s hospitality will continue to amaze me, especially considering I was there for four months and the lines between work and personal life can get blurry. I have met the most amazing people, who have become, still are and will be my friends from Gamboa for life. Also, working at APPC has taught me so much about sloths and the other animals living there. They do incredibly good work and I am very happy to have been part of a team where people care so much about animals and nature. Luckily, there is still so much good in the world 🙂

Thanks to everyone who made my stay at Dinalab and in Gamboa in general so memorable. Thanks for all the help, fun, support, knowledge and crazy adventures. And an extra big shout out to Andy and Kitty: thanks for making me feel so welcome and at home. Thanks for teaching me so many cool new fun facts and skills. And lastly, thank you for letting me mess up Dinalab, use all the cool machines and eat your pizza 😉

Can’t believe that this was my last blog post from my stay at Dinalab (at least for now ;)). I really hope to get back to Gamboa, because I have had the time of my life there! Until next time. Love you all <3

With lots of love,

Lieke

Amateur biologist 😉